AI is accelerating developer productivity at a pace that security practices struggle to match. At Symbiotic Security, we believe that organizations succeeding in this shift are the ones that combine velocity with native security, not as an afterthought, but as part of the development workflow itself. Our work is built around that principle: shifting teams from a reactive posture to a proactive one by detecting and remediating vulnerabilities at pre-commit, before risky code ever reaches a repository.

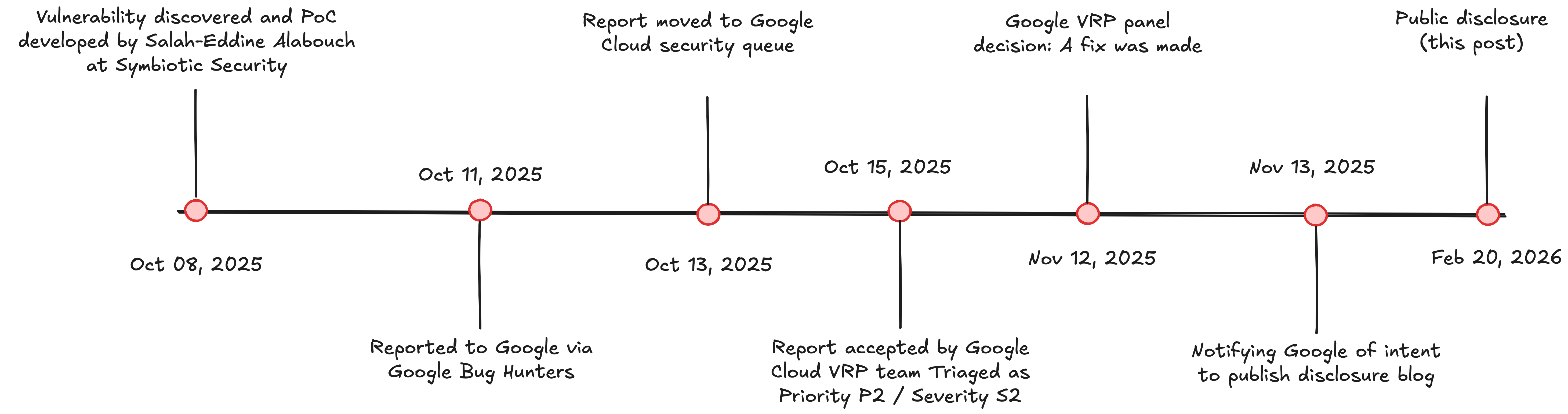

One of the ways we validate and sharpen our detection capabilities is by running our tooling against real-world codebases and security-adjacent software. In October 2025, during one of these research sessions, we turned our attention to the Gemini CLI Security Extension, an official extension for Google's Gemini CLI that allows developers to analyze their source code for security vulnerabilities directly from their terminal. The irony was not lost on us: our security tool found a security vulnerability inside a security tool.

This post is a full technical disclosure of that finding, published after the standard 90-day disclosure window and following Google's confirmation that the issue has been patched.

The Gemini CLI Security Extension is a plugin that integrates with Google's Gemini CLI. It exposes a /security:analyze command that lets a developer point it at a source file and receive an AI-powered security review. Under the hood, the extension runs an MCP (Model Context Protocol) server, a Node.js process written in TypeScript, that Gemini calls as a tool when it needs to look up line numbers for code it has identified as potentially vulnerable.

The extension is listed on the official Gemini CLI extensions page and is aimed at developers who want a fast, AI-driven first pass on their code before a full code review.

Before diving into the specifics, a quick primer for readers who are not JavaScript developers.

In JavaScript, every object implicitly inherits properties from a shared ancestor called Object.prototype. Think of it like a blueprint that all objects inherit from by default. Prototype pollution is a class of vulnerability where an attacker can inject properties into this shared blueprint, causing those properties to appear on every object in the application, including ones the attacker never directly touched.

Before pollution:

const user = {};

console.log(user.isAdmin); // undefined

After pollution of Object.prototype:

Object.prototype.isAdmin = true;

const user = {};

console.log(user.isAdmin); // true ← dangerous!

The key trigger: when you use a plain JavaScript object (curly braces) as a key-value store and access properties using bracket notation (obj[key]), certain keys such as "constructor" and "__proto__" do not behave as regular string keys. Instead of undefined, they return members inherited through the prototype chain. A third key, "prototype", matters once a lookup has already reached a constructor function, because it leads from that function to the shared prototype object.
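
To make the lookup behavior concrete, here is a minimal sketch. The variable names are hypothetical and the snippet is not taken from the extension; it only shows how bracket access on an empty plain object resolves through the prototype chain.

```typescript
// Hypothetical demo values; not taken from the extension's code.
const plainStore: Record<string, unknown> = {};

// Inherited members leak through bracket access on a plain object.
const ctorLookup = plainStore["constructor"];    // the built-in Object function
const protoLookup = plainStore["__proto__"];     // Object.prototype itself
const missingLookup = plainStore["no-such-key"]; // an ordinary missing key

console.log(typeof ctorLookup);                // "function"
console.log(protoLookup === Object.prototype); // true
console.log(missingLookup);                    // undefined

// An own-property check ignores anything inherited from the prototype chain.
const ownsCtor = Object.prototype.hasOwnProperty.call(plainStore, "constructor");
console.log(ownsCtor); // false
```

The own-property check at the end is one way to tell a key the program stored apart from a member the object merely inherited.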

The Vulnerable Code

The vulnerability lives in mcp-server/src/security.ts, in a function called findLineNumbers(). This function reads a file and builds a lookup table mapping each line of content to its line number(s) in the file.

// mcp-server/src/security.ts — Lines 64–86 (VULNERABLE)

const lineToNumbers: { [key: string]: number[] } = {}; // Plain object used as map

for (let i = 0; i < lines.length; i++) {

const trimmedLine = lines[i].trim(); // Content comes directly from the file

if (!lineToNumbers[trimmedLine]) { // Bracket notation with user-controlled key

lineToNumbers[trimmedLine] = [];

}

lineToNumbers[trimmedLine].push(i + 1);

}

The problem is the combination of two things:

- A plain object ({}) used as a key-value store
- Bracket-notation access with a key taken directly from file content

The trigger is a bare constructor keyword on its own line: a single word, nothing else. This is syntactically valid JavaScript (it is an expression that evaluates to the Object constructor), so it does not cause a lint error or a syntax warning. When the MCP server processes a file containing this line, lineToNumbers["constructor"] does not return undefined. It returns function Object() { [native code] }, the Object constructor itself. The code then tries to call .push() on that constructor function, which throws a runtime error and opens the door to deeper exploitation.

Attacker-crafted file (submitted as a PR to a collaborative project):

...

class PaymentProcessor {

// Normal constructor method: trimmed = "constructor(config) {", does NOT trigger

constructor(config) {

this.config = config;

}

processPayment(amount) {

return this.charge(amount);

}

}

// Standalone keyword below looks like a stray line or typo: trimmed = "constructor", TRIGGERS the vulnerability

constructor

module.exports = PaymentProcessor;

...

MCP server processing:

trimmedLine = "constructor"

lineToNumbers["constructor"]

→ returns: function Object() { [native code] }

→ type: "function"

→ NOT undefined!

Attempted:

lineToNumbers["constructor"].push(lineNumber)

→ TypeError: lineToNumbers[trimmedLine].push is not a function
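
The trace above can be reproduced with a small standalone sketch. The function name buildLineIndexUnsafe and the sample input are hypothetical; the loop body mirrors the lookup-table pattern quoted earlier rather than the extension's full source.

```typescript
// Sketch of the plain-object lookup pattern (function name is hypothetical).
function buildLineIndexUnsafe(content: string): { [key: string]: number[] } {
  const lineToNumbers: { [key: string]: number[] } = {};
  content.split("\n").forEach((line, i) => {
    const trimmedLine = line.trim();
    if (!lineToNumbers[trimmedLine]) {
      lineToNumbers[trimmedLine] = [];
    }
    // For "constructor" the lookup yields the inherited Object function,
    // which has no push method, so this call throws.
    lineToNumbers[trimmedLine].push(i + 1);
  });
  return lineToNumbers;
}

// Hypothetical input modeled on the crafted file shown above.
const sampleFile = [
  "class PaymentProcessor {",
  "  constructor(config) {",
  "    this.config = config;",
  "  }",
  "}",
  "constructor",
  "module.exports = PaymentProcessor;",
].join("\n");

let caught: unknown;
try {
  buildLineIndexUnsafe(sampleFile);
} catch (err) {
  caught = err;
}
console.log(caught instanceof TypeError); // true
```

A file without such a line is indexed normally, which is why the pattern can pass ordinary testing unnoticed.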

This is the foothold. From here, an attacker who controls the contents of the analyzed file can access Object.prototype through lineToNumbers["constructor"]["prototype"] and pollute it.

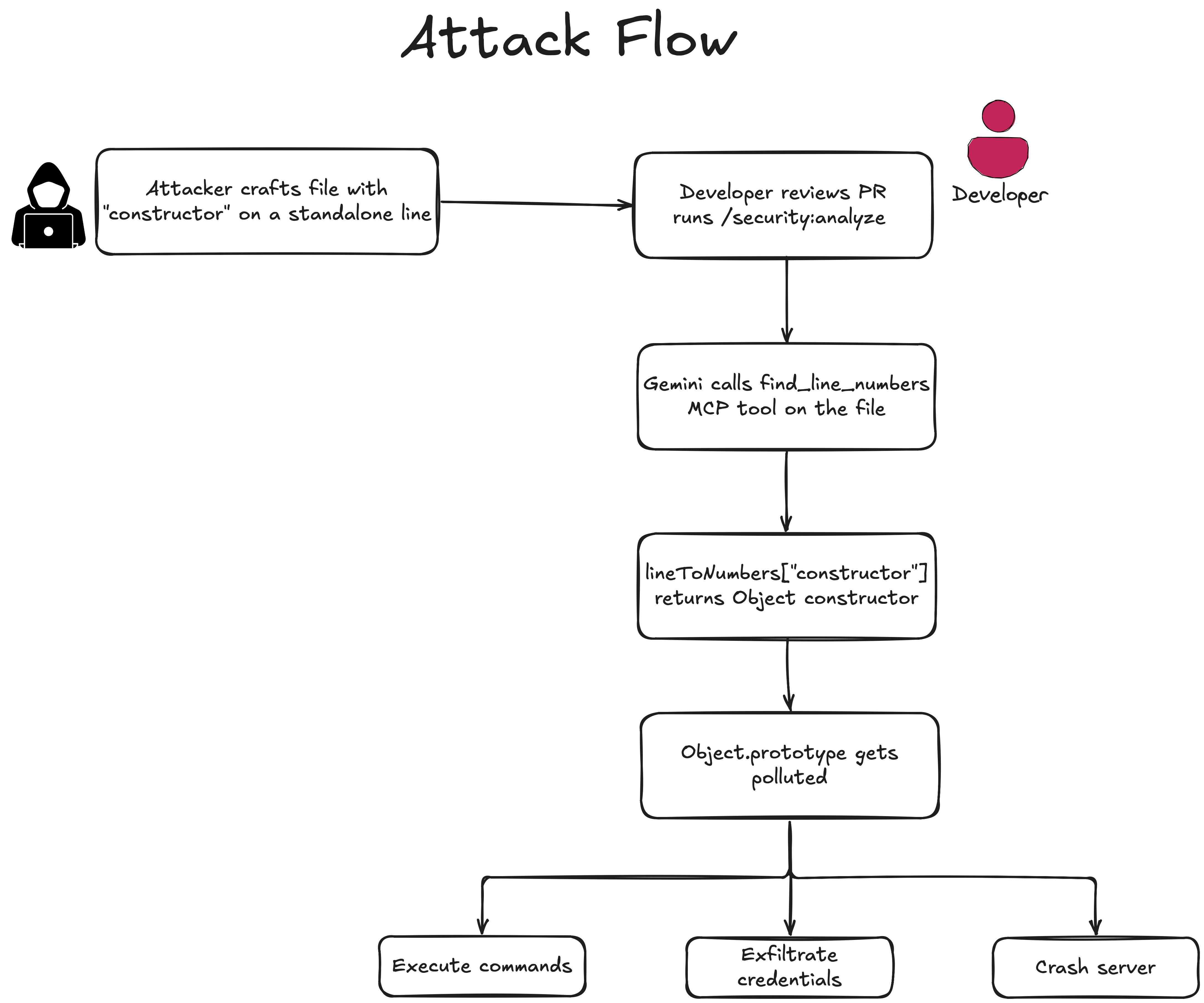

This is where the severity becomes apparent. Because the MCP server is a Node.js process running on the developer's machine, a successful prototype pollution attack can escalate quickly.

By polluting Object.prototype with a method that executes shell commands, any subsequently created object in the process inherits that method. Our proof-of-concept confirmed the following were accessible from within the MCP server process:

- Shell command execution (whoami, pwd, reading files)
- Environment variables, including API_KEY, DATABASE_URL, and AWS_CREDENTIALS if set

Perhaps the most troubling impact is the ability to hide vulnerabilities from the security report itself. By polluting prototype properties that influence the analysis logic (e.g., skipSecurityCheck, isApproved, severity), an attacker could cause Gemini's security scan to silently drop critical findings.

Our proof-of-concept demonstrated this principle against simulated analysis logic: after polluting Object.prototype.skipSecurityCheck = true, any function checking that property on a finding object, a common pattern in security tooling, would silently skip it. The actual Gemini analysis is AI-driven, but the MCP server infrastructure it relies on is JavaScript running in Node.js. Any part of that layer that checks properties on plain objects after pollution has occurred would be affected. The result: a developer could receive a clean report on code that contains real vulnerabilities, and approve it with full confidence in the tooling.
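
The effect described above can be illustrated with a short sketch. This is not the original proof-of-concept: the Finding shape and both filter functions are hypothetical, and the polluted state is set up directly, on the assumption that pollution has already happened by some other route.

```typescript
// Hypothetical finding shape for a simulated report.
interface Finding {
  id: string;
  severity: "low" | "high";
  skipSecurityCheck?: boolean;
}

// Naive filter: trusts whatever the property lookup returns, inherited or not.
function reportNaive(findings: Finding[]): Finding[] {
  return findings.filter((f) => !f.skipSecurityCheck);
}

// Hardened filter: honors the flag only when it is an own property of the finding.
function reportOwnOnly(findings: Finding[]): Finding[] {
  return findings.filter(
    (f) =>
      !(Object.prototype.hasOwnProperty.call(f, "skipSecurityCheck") &&
        f.skipSecurityCheck),
  );
}

const sampleFindings: Finding[] = [{ id: "hardcoded-secret", severity: "high" }];

const countBefore = reportNaive(sampleFindings).length;

// Assumption: simulate an already-polluted Object.prototype.
const sharedProto = Object.prototype as any;
sharedProto.skipSecurityCheck = true;
const naiveAfter = reportNaive(sampleFindings).length;
const ownOnlyAfter = reportOwnOnly(sampleFindings).length;
delete sharedProto.skipSecurityCheck; // restore the shared prototype

console.log(countBefore, naiveAfter, ownOnlyAfter); // 1 0 1
```

The naive filter drops the high-severity finding once the flag is inherited, while the own-property variant keeps reporting it.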

This is a meta-vulnerability: a security tool being weaponized to produce false negatives.

The practical attack path runs through open-source contribution workflows. A threat actor submits a pull request to an open-source project. The file they submit is legitimate-looking JavaScript that happens to contain a standalone constructor line. When a maintainer runs /security:analyze on that file to review it for security issues, the vulnerability triggers, potentially hiding the very backdoor embedded in the submitted code.

The trigger does not happen accidentally; an attacker has to embed it deliberately. But that is precisely what makes this dangerous in the context of how Gemini CLI is actually used. Gemini CLI is built for vibe-coding: an agentic, AI-driven workflow where the developer lets the model read, write, and analyze files across a codebase autonomously. In that workflow, the developer is not manually selecting which files get processed; Gemini is. An attacker who contributes a file to a collaborative or open-source project (via a pull request, for instance) only needs to include a single bare constructor line somewhere in that file. It looks like a stray expression or a typo. It passes syntax checks. And the next time a maintainer uses Gemini CLI to work on the project, the MCP server will process it without anyone specifically asking it to.

Google marked the issue as fixed and acknowledged the contribution. While the VRP panel determined that the attack scenario did not meet the bar for a financial reward, the product team patched the code via PR #91 (tracked in issue #90).

Beyond the direct fix, the report triggered a broader team initiative: issue #95 — a structured roadmap to systematically hunt for and eliminate prototype pollution across the entire Gemini CLI Security Extension codebase. The initiative laid out a full action plan: curating real-world CVE examples from the OSSF benchmark dataset, baselining the extension's current detection rate against those examples, updating the Gemini analysis prompt to explicitly instruct the model to look for unsafe object merges and direct __proto__/constructor.prototype modifications from user-controlled input, and adding representative test cases to the internal benchmark to prevent regressions. In other words, a single reported bug prompted Google's team to close the gap on an entire vulnerability class in their detection pipeline.
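
For readers unfamiliar with the "unsafe object merge" pattern named in that roadmap, here is a minimal sketch of it. The mergeDeep helper, its guard flag, and the payload are all hypothetical and are not drawn from the extension or from the benchmark dataset.

```typescript
type Dict = Record<string, unknown>;

function isDict(value: unknown): value is Dict {
  return typeof value === "object" && value !== null;
}

// Keys that can steer a recursive merge onto the shared prototype.
const BLOCKED_KEYS = new Set(["__proto__", "constructor", "prototype"]);

// Recursive merge; with guard=false it follows "__proto__" into Object.prototype.
function mergeDeep(target: Dict, source: Dict, guard: boolean): Dict {
  for (const key of Object.keys(source)) {
    if (guard && BLOCKED_KEYS.has(key)) continue;
    const incoming = source[key];
    const existing = target[key];
    if (isDict(incoming) && isDict(existing)) {
      mergeDeep(existing, incoming, guard);
    } else {
      target[key] = incoming;
    }
  }
  return target;
}

// JSON.parse creates "__proto__" as an ordinary own key on the parsed object.
const payload = JSON.parse('{"__proto__": {"polluted": true}}') as Dict;

mergeDeep({}, payload, true);
const afterGuarded = ({} as Dict)["polluted"];

mergeDeep({}, payload, false);
const afterUnguarded = ({} as Dict)["polluted"];
delete (Object.prototype as any).polluted; // restore the shared prototype

console.log(afterGuarded, afterUnguarded); // undefined true
```

Skipping the three blocked keys is one common mitigation; building merge targets with Object.create(null) is another.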

The fix is straightforward and worth highlighting because it illustrates an important defensive coding principle.

Vulnerable code uses a plain JavaScript object as a key-value map:

// BEFORE — vulnerable to prototype pollution

const lineToNumbers: { [key: string]: number[] } = {};

if (!lineToNumbers[trimmedLine]) {

lineToNumbers[trimmedLine] = [];

}

lineToNumbers[trimmedLine].push(i + 1);

Fixed code replaces the plain object with a Map:

// AFTER — immune to prototype pollution

const lineToNumbers = new Map();

if (!lineToNumbers.has(trimmedLine)) {

lineToNumbers.set(trimmedLine, []);

}

lineToNumbers.get(trimmedLine)!.push(i + 1);

Map stores its keys in an internal isolated structure that is completely separate from the JavaScript prototype chain. The string "constructor" is treated as an ordinary key with no special behavior. This one change eliminates the vulnerability entirely.
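
As a self-contained illustration of the Map-based approach, the sketch below indexes a few lines that would be problematic as plain-object keys. The function name buildLineIndex and the single-lookup style are assumptions for the example and may differ from the code merged upstream.

```typescript
// Map-backed line index (hypothetical helper, not the upstream implementation).
function buildLineIndex(content: string): Map<string, number[]> {
  const lineToNumbers = new Map<string, number[]>();
  content.split("\n").forEach((line, i) => {
    const trimmedLine = line.trim();
    const existing = lineToNumbers.get(trimmedLine);
    if (existing) {
      existing.push(i + 1);
    } else {
      lineToNumbers.set(trimmedLine, [i + 1]);
    }
  });
  return lineToNumbers;
}

const lineIndex = buildLineIndex("constructor\n__proto__\nconstructor");
console.log(lineIndex.get("constructor")); // [ 1, 3 ]
console.log(lineIndex.get("__proto__"));   // [ 2 ]
console.log(lineIndex.get("toString"));    // undefined
```

Typing the map as Map<string, number[]> also lets the compiler check what goes into each bucket.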

For developers writing similar lookup patterns in JavaScript or TypeScript, the rule of thumb is: when keys come from user-controlled or external input, always use Map instead of a plain object.

The fix was applied in GitHub PR #91 and tracked via issue #90.

This finding validates something central to what we do at Symbiotic Security: security cannot be bolted on at the end of the development cycle, and the tools developers rely on to enforce security are not exempt from scrutiny.

The core challenge in modern development is not a lack of security tooling; it is that most security feedback arrives too late, after code has already been written, reviewed, and shipped. Symbiotic Security's approach addresses this by embedding detection and remediation at the pre-commit stage, directly inside the developer's workflow, with AI guardrails aligned to each organization's security policy. The result, across teams using our products, is 63% fewer vulnerabilities and 2.4× more compliant commits, not because developers become perfect, but because risks are caught and fixed before they accumulate.

Our detection of this prototype pollution pattern during a routine research sweep is a direct exercise of those same capabilities. The vulnerable code lived inside a security extension, which makes the stakes higher, not lower: developers extend more trust to tools explicitly designed to protect them. The Gemini CLI Security Extension is used at code review time, the exact moment when a developer's guard is slightly down because they are relying on automation. An attacker who understands this workflow has a high-value target.

This is also a clear example of why AI-generated and AI-assisted code requires the same, and sometimes more rigorous, security scrutiny as human-written code. Hybrid coding teams that don't adapt their security posture to match their velocity are accepting compounding risk. That is the problem Symbiotic Security exists to solve.

We will continue publishing research like this as part of our commitment to transparency and to the broader security community. If you want to understand how our tooling detects vulnerability classes like this one, or what patterns look like in your own codebase, reach out to us.

If you use the Gemini CLI Security Extension, update to the latest version, which contains the fix applied after this report.

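More broadly, when a lookup table's keys come from user-controlled or external input, back it with Map or Object.create(null) rather than a plain object.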
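
The Object.create(null) option can be sketched as follows. The names are hypothetical; the point is only that an object created without a prototype has nothing to inherit, so keys such as "constructor" behave like any other string.

```typescript
// Null-prototype object used as a dictionary (hypothetical example).
const bareStore: Record<string, number[]> = Object.create(null);

["constructor", "toString", "constructor"].forEach((key, i) => {
  // Reuse the bucket if the key was stored before, otherwise create it.
  const bucket = bareStore[key] ?? (bareStore[key] = []);
  bucket.push(i + 1);
});

console.log(Object.getPrototypeOf(bareStore)); // null
console.log(bareStore["constructor"]);         // [ 1, 3 ]
console.log(bareStore["toString"]);            // [ 2 ]
console.log(Object.keys(bareStore));           // [ 'constructor', 'toString' ]
```

The trade-off is that such an object also lacks inherited helpers like hasOwnProperty and toString, so code that handles it should rely on static functions such as Object.keys. Map avoids that caveat and is usually the simpler choice.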